Keeping up with an industry as fast-moving as AI is a tall order. So until an AI can do it for you, here’s a handy roundup of the last week’s stories in the world of machine learning, along with notable research and experiments we didn’t cover on their own.

If it wasn’t obvious already, the competitive landscape in AI, particularly the subfield known as generative AI, is red-hot. And it’s getting hotter. This week, Dropbox launched its first corporate venture fund, Dropbox Ventures, which the company said would focus on startups building AI-powered products that “shape the future of work.” Not to be outdone, AWS debuted a $100 million program to fund generative AI initiatives spearheaded by its partners and customers.

There’s a lot of money being thrown around in the AI space, to be sure. Salesforce Ventures, Salesforce’s VC division, plans to pour $500 million into startups developing generative AI technologies. Workday recently added $250 million to its existing VC fund specifically to back AI and machine learning startups. And Accenture and PwC have announced that they plan to invest $3 billion and $1 billion, respectively, in AI.

But one wonders whether money is the solution to the AI field’s outstanding challenges.

In an enlightening panel during a Bloomberg conference in San Francisco this week, Meredith Whittaker, the president of secure messaging app Signal, made the case that the tech underpinning some of today’s buzziest AI apps is becoming dangerously opaque. She gave the example of someone who walks into a bank and asks for a loan.

That person could be denied the loan and have “no idea that there’s a system in [the] back probably powered by some Microsoft API that determined, based on scraped social media, that I wasn’t creditworthy,” Whittaker said. “I’m never going to know [because] there’s no mechanism for me to know this.”

It’s not capital that’s the issue. Rather, it’s the current power hierarchy, Whittaker says.

“I’ve been at the table for like, 15 years, 20 years. I’ve been at the table. Being at the table with no power is nothing,” she continued.

Of course, achieving structural change is far tougher than scrounging around for cash, particularly when the structural change won’t necessarily favor the powers that be. And Whittaker warns what might happen if there isn’t enough pushback.

As progress in AI accelerates, the societal impacts also accelerate, and we’ll continue heading down a “hype-filled road toward AI,” she said, “where that power is entrenched and naturalized under the guise of intelligence and we are surveilled to the point [of having] very, very little agency over our individual and collective lives.”

That should give the industry pause. Whether it actually will is another matter. That’s probably something we’ll hear discussed when she takes the stage at Disrupt in September.

Here are the other AI headlines of note from the past few days:

- DeepMind’s AI controls robots: DeepMind says that it has developed an AI model, called RoboCat, that can perform a range of tasks across different models of robotic arms. That alone isn’t especially novel. But DeepMind claims that the model is the first to be able to solve and adapt to multiple tasks, and to do so using different, real-world robots.

- Robots learn from YouTube: Speaking of robots, CMU Robotics Institute assistant professor Deepak Pathak this week showcased VRB (Vision-Robotics Bridge), an AI system designed to train robotic systems by watching a recording of a human. The robot watches for a few key pieces of information, including contact points and trajectory, and then attempts to execute the task.

- Otter gets into the chatbot game: Automatic transcription service Otter announced a new AI-powered chatbot this week that’ll let people ask questions during and after a meeting and help them collaborate with teammates.

- EU calls for AI regulation: European regulators are at a crossroads over how AI will be regulated, and ultimately used commercially and noncommercially, in the region. This week, the EU’s largest consumer group, the European Consumer Organisation (BEUC), weighed in with its own position: Stop dragging your feet, and “launch urgent investigations into the risks of generative AI” now, it said.

- Vimeo launches AI-powered features: This week, Vimeo announced a suite of AI-powered tools designed to help users create scripts, record footage using a built-in teleprompter and remove long pauses and unwanted disfluencies like “ahs” and “ums” from the recordings.

- Capital for synthetic voices: ElevenLabs, the viral AI-powered platform for creating synthetic voices, has raised $19 million in a new funding round. ElevenLabs picked up steam rather quickly after its launch in late January. But the publicity hasn’t always been positive, particularly once bad actors began to exploit the platform for their own ends.

- Turning audio into text: Gladia, a French AI startup, has launched a platform that uses OpenAI’s Whisper transcription model to turn any audio into text in near real time via an API. Gladia promises that it can transcribe an hour of audio for $0.61, with the transcription process taking roughly 60 seconds.

- Harness embraces generative AI: Harness, a startup creating a toolkit to help developers operate more efficiently, this week injected its platform with a little AI. Now, Harness can automatically resolve build and deployment failures, find and fix security vulnerabilities and make suggestions for bringing cloud costs under control.
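Gladia’s pricing claim is easier to compare with other services once it is broken down per minute. The quick back-of-the-envelope check below uses only the two figures quoted in the item above (the $0.61 price for an hour of audio and the roughly 60-second turnaround, which is itself approximate). The function name is just for illustration. By this rough math, the service costs about a cent per minute of audio and runs roughly 60 times faster than real time.

```python
# Figures quoted for Gladia's service in the roundup above.
AUDIO_SECONDS = 60 * 60      # one hour of audio
PRICE_USD = 0.61             # quoted price for that hour
PROCESSING_SECONDS = 60      # "roughly 60 seconds" to transcribe it


def transcription_stats(audio_seconds: float, price_usd: float,
                        processing_seconds: float) -> dict:
    """Derive per-minute cost and speed relative to real time."""
    audio_minutes = audio_seconds / 60
    return {
        "usd_per_audio_minute": price_usd / audio_minutes,
        # Seconds of audio handled per second of wall-clock time.
        "times_faster_than_real_time": audio_seconds / processing_seconds,
    }


stats = transcription_stats(AUDIO_SECONDS, PRICE_USD, PROCESSING_SECONDS)
print(f"${stats['usd_per_audio_minute']:.4f} per audio minute")
print(f"{stats['times_faster_than_real_time']:.0f}x faster than real time")
```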

Different machine learnings

This week was CVPR up in Vancouver, Canada, and I wish I could have gone, because the talks and papers look super interesting. If you can only watch one, check out Yejin Choi’s keynote about the possibilities, impossibilities, and paradoxes of AI.

Image Credits: CVPR/YouTube

The UW professor and MacArthur Genius grant recipient first addressed a few unexpected limitations of today’s most capable models. In particular, GPT-4 is really bad at multiplication. It fails to find the product of two three-digit numbers correctly at a surprising rate, although with a little coaxing it can get it right 95% of the time. Why does it matter that a language model can’t do math, you ask? Because the entire AI market right now is predicated on the idea that language models generalize well to lots of interesting tasks, including stuff like doing your taxes or accounting. Choi’s point was that we should be looking for the limitations of AI and working inward, not vice versa, as that tells us more about their capabilities.
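To make “fails at a surprising rate” concrete, here is a minimal sketch of how such a test could be scored: draw random pairs of three-digit numbers, ask a model for the product, and count exact matches. `ask_model` is a hypothetical stand-in for prompting a real language model and parsing the integer out of its reply. The two toy “models” below are made up purely to show the scoring, and nothing here reproduces the 95% figure from the talk.

```python
import random
from typing import Callable


def multiplication_accuracy(ask_model: Callable[[int, int], int],
                            trials: int = 1000, seed: int = 0) -> float:
    """Fraction of random three-digit products that ask_model gets exactly right."""
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    correct = 0
    for _ in range(trials):
        a = rng.randint(100, 999)
        b = rng.randint(100, 999)
        if ask_model(a, b) == a * b:  # exact match only; no partial credit
            correct += 1
    return correct / trials


# Hypothetical stand-ins for "prompt the model and parse the integer it returns".
def exact_model(a: int, b: int) -> int:
    return a * b


def last_digit_dropping_model(a: int, b: int) -> int:
    # Gets the magnitude right but zeroes the final digit, a toy failure mode.
    return (a * b) // 10 * 10


print(multiplication_accuracy(exact_model))
print(multiplication_accuracy(last_digit_dropping_model))
```

Exact-match scoring is deliberately strict. An answer that is off by a single digit counts as wrong, which is the standard you would want before trusting a model with your taxes.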

The other parts of her talk were equally interesting and thought-provoking. You can watch the whole thing here.

Rod Brooks, introduced as a “slayer of hype,” gave an interesting history of some of the core concepts of machine learning, concepts that only seem new because most of the people applying them weren’t around when they were invented! Going back through the decades, he touches on McCulloch, Minsky, even Hebb, and shows how the ideas stayed relevant well beyond their time. It’s a helpful reminder that machine learning is a field standing on the shoulders of giants going back to the postwar era.

Many, many papers were submitted to and presented at CVPR, and it’s reductive to look only at the award winners, but this is a news roundup, not a comprehensive literature review. So here’s what the judges at the conference thought was most interesting:

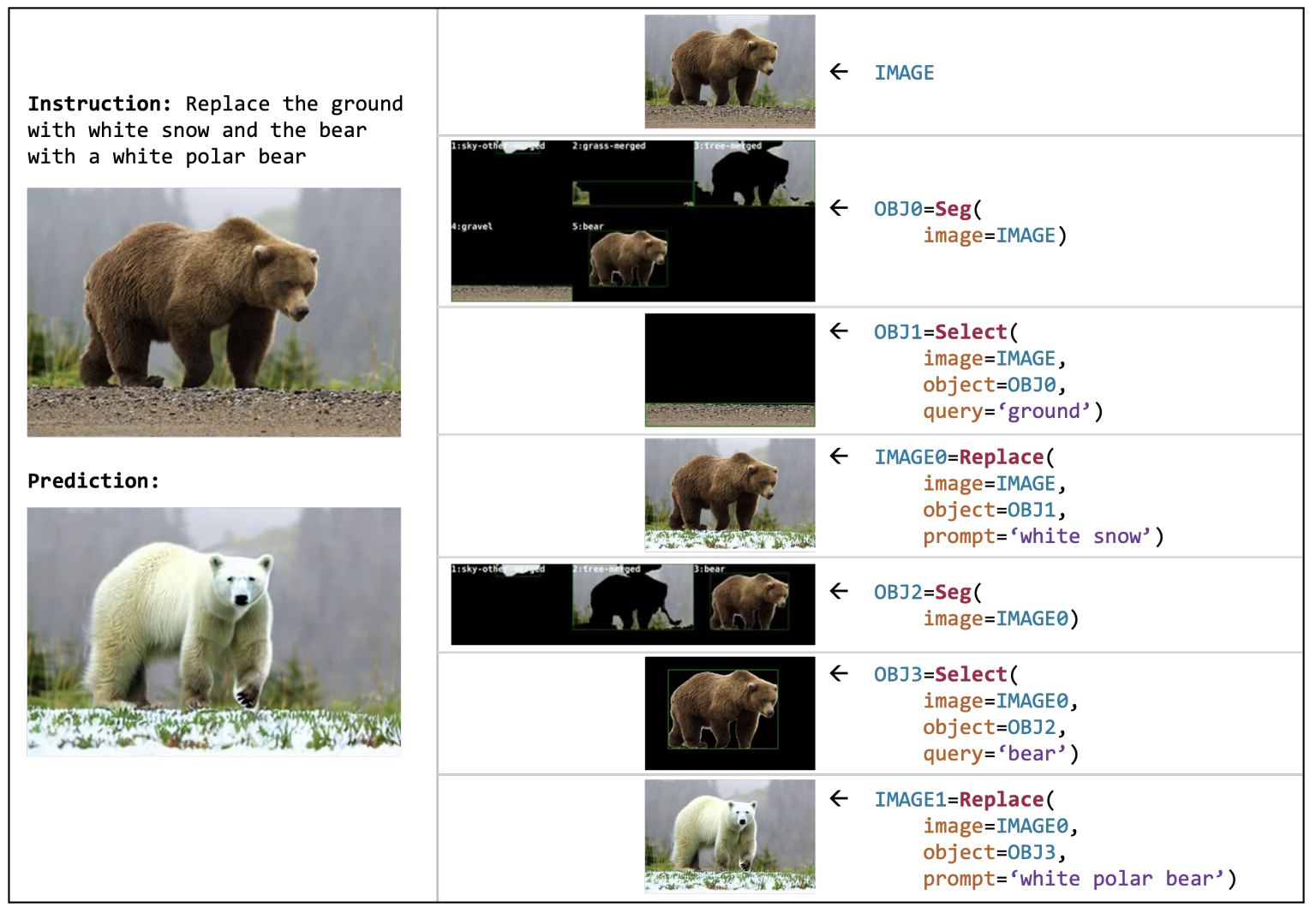

Image Credits: AI2

VISPROG, from researchers at AI2, is a sort of meta-model that performs complex visual manipulation tasks using a multi-purpose code toolbox. Say you have a picture of a grizzly bear on some grass (as pictured). You can just tell it to “replace the bear with a polar bear on snow” and it gets to work. It identifies the parts of the image, separates them visually, searches for and finds or generates a suitable replacement, and stitches the whole thing back together intelligently, with no further prompting needed on the user’s part. The Blade Runner “enhance” interface is starting to look downright pedestrian. And that’s just one of its many capabilities.
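The “code toolbox” idea is easier to picture with a toy example. VISPROG’s published approach has a large language model write a short program, one module call per line, and then runs each step in order. The sketch below imitates only that execution loop. The module names (`SEG`, `SELECT`, `REPLACE`), their arguments, the dict-based “image,” and the hand-written program are all hypothetical simplifications. They are not VISPROG’s actual modules, and no real vision models are involved.

```python
import re
from typing import Any, Callable, Dict, List

# A toy "image": region name -> what occupies that region (purely illustrative).
Image = Dict[str, str]


def seg(image: Image) -> List[str]:
    """Pretend segmenter: returns the names of the regions in the image."""
    return list(image)


def select(objects: List[str], query: str) -> List[str]:
    """Keep only the regions that match the query."""
    return [obj for obj in objects if obj == query]


def replace(image: Image, objects: List[str], prompt: str) -> Image:
    """Pretend inpainting: overwrite each selected region with the prompt."""
    edited = dict(image)
    for obj in objects:
        edited[obj] = prompt
    return edited


MODULES: Dict[str, Callable[..., Any]] = {"SEG": seg, "SELECT": select, "REPLACE": replace}
STEP = re.compile(r"^(\w+)=(\w+)\((.*)\)$")  # e.g. OBJ0=SEG(image=IMAGE)


def parse_args(arg_str: str, env: Dict[str, Any]) -> Dict[str, Any]:
    """Quoted values are literals; bare names refer to earlier results.
    Splitting on commas is a simplification: literals must not contain commas."""
    args = {}
    for part in arg_str.split(","):
        key, value = (s.strip() for s in part.split("=", 1))
        args[key] = value[1:-1] if value.startswith("'") else env[value]
    return args


def run_program(program: str, image: Image) -> Any:
    """Execute one module call per line, storing each result under its name."""
    env: Dict[str, Any] = {"IMAGE": image}
    target = "IMAGE"
    for line in program.strip().splitlines():
        match = STEP.match(line.strip())
        if match is None:
            raise ValueError(f"cannot parse step: {line!r}")
        target, module, arg_str = match.groups()
        env[target] = MODULES[module](**parse_args(arg_str, env))
    return env[target]  # the last step's output is the answer


# Hand-written here; in VISPROG a language model generates the program.
PROGRAM = """
OBJ0=SEG(image=IMAGE)
OBJ1=SELECT(objects=OBJ0,query='bear')
IMAGE0=REPLACE(image=IMAGE,objects=OBJ1,prompt='polar bear on snow')
"""

print(run_program(PROGRAM, {"bear": "grizzly bear", "ground": "grass"}))
```

The appeal of this design is that every intermediate result has a name and can be inspected, so when an edit goes wrong you can see which step failed.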

“Planning-oriented autonomous driving,” from a multi-institutional Chinese research group, attempts to unify the various pieces of the rather piecemeal approach we’ve taken to self-driving cars. Ordinarily there’s a sort of stepwise process of “perception, prediction, and planning,” each of which might have a number of sub-tasks (like segmenting people, identifying obstacles, etc.). Their model attempts to put all of these into one model, kind of like the multimodal models we see that can use text, audio, or images as input and output. In a similar way, this model simplifies some of the complex interdependencies of a modern autonomous driving stack.

DynIBaR shows a high-quality and robust method of interacting with video using “dynamic Neural Radiance Fields,” or NeRFs. A deep understanding of the objects in the video allows for things like stabilization, dolly movements, and other things you generally don’t expect to be possible once the video has already been recorded. Again… “enhance.” This is definitely the kind of thing that Apple hires you for, and then takes credit for at the next WWDC.
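For anyone wondering what a “radiance field” actually renders, here is a minimal sketch of the compositing step from the original, static NeRF formulation. Samples along a camera ray each have a density σ and a color, and the pixel color is their weighted sum. Each weight is the sample’s opacity, 1 − exp(−σ·δ), multiplied by the fraction of light that reaches it unblocked. Dynamic variants such as DynIBaR build on this idea in much more elaborate form, so treat this as background, not as their method. The function name and sample values are illustrative.

```python
import numpy as np


def composite_ray(sigmas, colors, deltas):
    """Standard NeRF quadrature: blend per-sample colors into one pixel color.

    sigmas: (N,) densities at N samples along the ray, nearest first
    colors: (N, 3) RGB emitted at each sample
    deltas: (N,) distance between consecutive samples
    """
    sigmas = np.asarray(sigmas, dtype=float)
    colors = np.asarray(colors, dtype=float)
    deltas = np.asarray(deltas, dtype=float)
    # Opacity of each segment: alpha_i = 1 - exp(-sigma_i * delta_i)
    alphas = 1.0 - np.exp(-sigmas * deltas)
    # Transmittance T_i: fraction of light reaching sample i unblocked
    transmittance = np.cumprod(np.concatenate(([1.0], 1.0 - alphas[:-1])))
    weights = transmittance * alphas
    return (weights[:, None] * colors).sum(axis=0), weights


# Empty space, then an opaque green surface, then a blue one hidden behind it.
rgb, w = composite_ray(
    sigmas=[0.0, 1e6, 5.0],
    colors=[[1, 0, 0], [0, 1, 0], [0, 0, 1]],
    deltas=[1.0, 1.0, 1.0],
)
print(rgb, w)
```

Because every step here is differentiable, a network that predicts σ and color can be trained simply by comparing rendered pixels against real frames.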

You may remember DreamBooth from a little earlier this year, when the project’s page went live. It’s the best system yet for, there’s no way around saying it, making deepfakes. Of course it’s valuable and powerful to be able to do these kinds of image operations, not to mention fun, and researchers like those at Google are working to make it more seamless and realistic. Consequences… later, maybe.

The best student paper award goes to a method for comparing and matching meshes, or 3D point clouds. Frankly, it’s too technical for me to try to explain, but this is an important capability for real-world perception, and improvements are welcome. Check out the paper here for examples and more info.
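The award-winning paper’s own method isn’t reproduced here. To give a feel for what “matching” two point clouds means, here is a minimal sketch of the classic Kabsch (SVD) alignment step. It assumes the correspondences between the two clouds are already known and correct, and from them recovers the rotation and translation that carry one cloud onto the other. Finding reliable correspondences in noisy, real-world scans is the hard part that registration research, including work like this, tackles. The function name and test data below are illustrative.

```python
import numpy as np


def kabsch(source: np.ndarray, target: np.ndarray):
    """Least-squares rigid alignment: find R, t with R @ source[i] + t ≈ target[i].

    source, target: (N, 3) arrays of already-matched 3D points.
    """
    src_center = source.mean(axis=0)
    tgt_center = target.mean(axis=0)
    # Cross-covariance of the centered point sets
    H = (source - src_center).T @ (target - tgt_center)
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so R is a proper rotation, not a reflection
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = tgt_center - R @ src_center
    return R, t


# Usage: rotate and shift a random cloud, then recover the motion.
rng = np.random.default_rng(0)
source = rng.normal(size=(50, 3))
angle = 0.7
R_true = np.array([[np.cos(angle), -np.sin(angle), 0.0],
                   [np.sin(angle),  np.cos(angle), 0.0],
                   [0.0,            0.0,           1.0]])
t_true = np.array([1.0, -2.0, 0.5])
target = source @ R_true.T + t_true
R_est, t_est = kabsch(source, target)
print(np.allclose(R_est, R_true), np.allclose(t_est, t_true))
```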

Just two more nuggets: Intel showed off an interesting model, LDM3D, for generating 3D 360-degree imagery such as virtual environments. So if you’re in the metaverse and you say “put us in an overgrown ruin in the jungle,” it just creates a fresh one on demand.

And Meta released a voice synthesis tool called Voicebox that’s super good at extracting the features of voices and replicating them, even when the input isn’t clean. Usually voice replication requires a good amount and variety of clean voice recordings, but Voicebox does it better than many others with less data (think around 2 seconds). Fortunately, they’re keeping this genie in the bottle for now. For those who think they might need their voice cloned, check out Acapela.